ServiceNow Workflow Timer Script - X days before/after date

December 14, 2012, 2:03 pm - James Farrer

This has come up a couple of times in the last couple of days, so I thought I'd post it here for future reference.

When you have a date variable in a catalog item and you need to assign a task or do something X days before or after that date, you can use this script in a workflow Timer activity. I made some adjustments to make it easier to set the number of days. Be sure to replace var_name with the name of the date variable you're using.

// Number of days to wait after the date in var_name (for days before the date, use a negative number)
var number_of_days = 1;
// Seconds from now until the date in var_name, plus the day offset converted to seconds (86400 seconds per day)
var time = parseInt(gs.dateDiff(gs.nowDateTime(), current.variable_pool.var_name.getDisplayValue(), true), 10) + (number_of_days * 86400);
// Set 'answer' to the number of seconds this timer should wait
answer = time;
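The Timer value is just arithmetic on seconds, so it can be sanity-checked outside ServiceNow. Here is a plain JavaScript sketch of the same calculation. `secondsToWait` is a hypothetical name, and clamping a negative result to zero is an added assumption about what you'd want once the target moment has already passed (the Timer script above does not clamp).

```javascript
// Hypothetical helper mirroring the Timer arithmetic outside ServiceNow.
// now and targetDate are Date objects; numberOfDays may be negative.
function secondsToWait(now, targetDate, numberOfDays) {
  var SECONDS_PER_DAY = 86400;
  // Whole seconds from now until the target date (negative if it has passed)
  var untilTarget = Math.floor((targetDate.getTime() - now.getTime()) / 1000);
  var total = untilTarget + numberOfDays * SECONDS_PER_DAY;
  // Assumption: never ask the timer to wait a negative amount of time
  return Math.max(0, total);
}

var now = new Date(Date.UTC(2012, 11, 14, 12, 0, 0));
var due = new Date(Date.UTC(2012, 11, 20, 12, 0, 0));
console.log(secondsToWait(now, due, 1));  // 6 days + 1 day = 604800
console.log(secondsToWait(now, due, -2)); // 6 days - 2 days = 345600
```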

JavaScript Date Difference (in Days)

December 7, 2012, 9:17 am - James Farrer

I had a client that needed a script to see if a date entered on a form was in the future. I had some code that did it, but it needed some cleanup. I reworked it a little and thought I'd post it here for future reference.

/**
 * Get the number of days difference between the date given and the current date
 *
 * Example
 * If today's date is 2012-12-07 then the following would return 2
 * dayDiff("2012-12-09");
 *
 * Parameter
 * date_string - date in the format of yyyy-mm-dd
 *
 * Returns
 * Number of days between date_string and the current date. Positive numbers are in the future.
 */
function dayDiff(date_string){
    // Accepts dates in the form of yyyy-mm-dd, change these next few lines if a different format is needed
    var parts = date_string.split("-");
    var yearfield = parseInt(parts[0], 10);
    var monthfield = parseInt(parts[1], 10);
    var dayfield = parseInt(parts[2], 10);
    // Build the date object (JavaScript months are zero-based)
    var dayobj = new Date(yearfield, monthfield - 1, dayfield);
    // Get today's date and time
    var t = new Date();
    // We only want the day so create a new date without the time component
    var today = new Date(t.getFullYear(), t.getMonth(), t.getDate());
    // Calculate the difference in milliseconds
    var diff = dayobj - today;
    // Convert the difference to days, rounding because a daylight saving
    // change makes the span between two local midnights 23 or 25 hours long
    return Math.round(diff / 1000 / 60 / 60 / 24);
}
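Since the original need was checking whether a date is in the future, here is a hedged variant that takes the reference day as a parameter (which makes it easy to test) and compares UTC midnights, so daylight saving changes can't produce fractional days. `dayDiffFrom` and `isInFuture` are hypothetical names, and treating today itself as "not in the future" is an assumption.

```javascript
// Hypothetical variant of dayDiff: the reference day is passed in, and both
// dates are converted to UTC midnights so every day is exactly 86400000 ms.
function dayDiffFrom(date_string, reference) {
  var parts = date_string.split("-").map(function (p) { return parseInt(p, 10); });
  var target = Date.UTC(parts[0], parts[1] - 1, parts[2]);
  var ref = Date.UTC(reference.getFullYear(), reference.getMonth(), reference.getDate());
  return (target - ref) / 86400000;
}

// Hypothetical helper for the original use case; today counts as not in the future
function isInFuture(date_string, reference) {
  return dayDiffFrom(date_string, reference) > 0;
}

var ref = new Date(2012, 11, 7); // local date 2012-12-07
console.log(dayDiffFrom("2012-12-09", ref)); // 2
console.log(isInFuture("2012-12-06", ref));  // false
```

In the browser you would pass `new Date()` as the reference to get the same behavior as the original function.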

Working with State (or other choice lists) in ServiceNow

October 29, 2012, 12:42 pm - James Farrer

A few days ago I ran into this problem again. While trying to understand it better, Mark Sandner and I did some testing and found out how to change the options without affecting other tables.

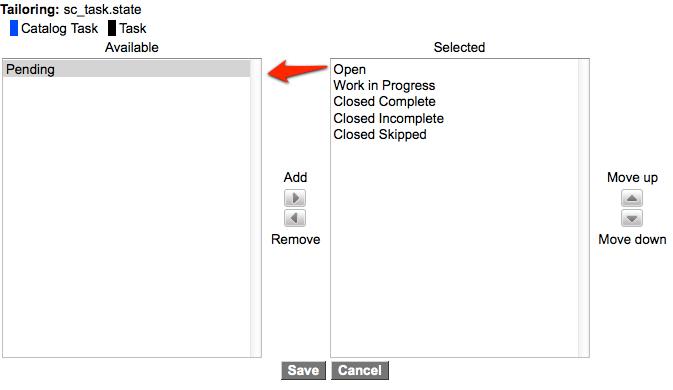

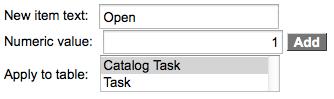

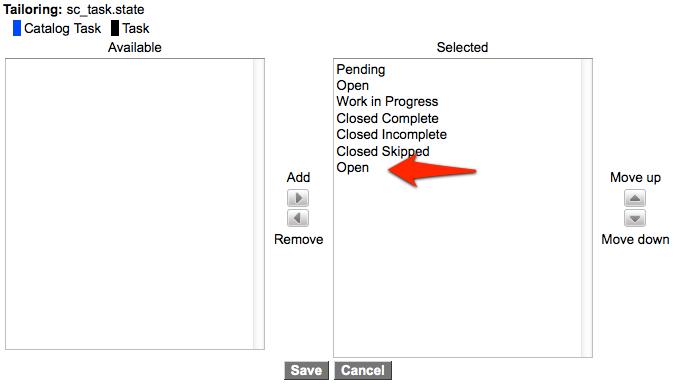

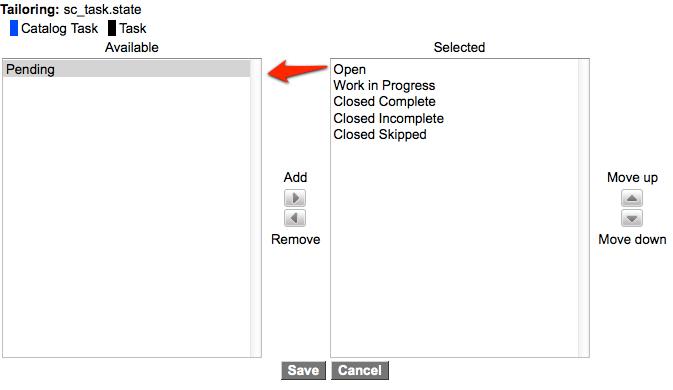

So, a little background on what's going on. When working with choice lists (a.k.a. drop-downs) on extended tables, the options can be shared from the top down. One of the biggest examples is the State field on the task table. Having this field in common across all task tables makes a ton of sense, but having the same values usually doesn't. Sometimes you'll want Work in Progress; sometimes you just want Open. Pending only applies in some circumstances.

Because of the way choice fields are set up, extended tables can have their own options for the drop-down. The issue is that if you just take the options used on the task table and move them over in the slushbucket, it deactivates those options for all tables that use them.

The good news is there's an easy way to work around this issue.

If, before deactivating anything, you first add a duplicate option that is specific to the table, the system will duplicate all of the options as table-specific copies, so you can then safely deactivate or change them as needed.

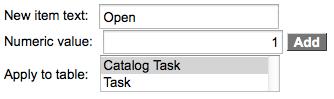

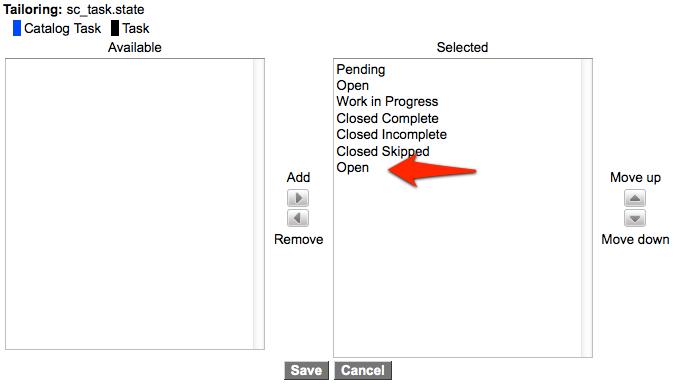

So first add a duplicate option. I used "Open" with the value of 1, like this:

Then save the options with it showing a duplicate.

When you save the changes it will create the duplicates. Then the next time you go to Personalize the choices it will show the same options, but this time they will be specific to the table and you can deactivate them, change them, or add new ones without causing a problem to other tables.
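To make the inheritance behavior concrete, here is a simplified JavaScript model. It is not ServiceNow's implementation; the `parents` map, the helper names, and the sample State values are illustrative assumptions. It shows why editing the shared options affects every child table, and why copying them onto the child table first avoids that.

```javascript
// Simplified, hypothetical model of choice inheritance (not ServiceNow code)
var parents = { incident: "task", problem: "task", task: null };

// Choice rows keyed by table; only "task" has its own options at first
var choices = {
  task: [
    { value: 1, label: "Open", inactive: false },
    { value: 2, label: "Work in Progress", inactive: false },
    { value: 3, label: "Closed Complete", inactive: false }
  ]
};

// Walk up the hierarchy until a table with its own choices is found.
// Without table-specific rows, incident and problem both get task's array,
// so editing it changes the options every child table sees.
function choicesFor(table) {
  for (var t = table; t; t = parents[t]) {
    if (choices[t]) return choices[t];
  }
  return [];
}

// Copy the inherited options onto the child table, as described in the post
function localizeChoices(table) {
  if (!choices[table]) {
    choices[table] = choicesFor(table).map(function (c) {
      return { value: c.value, label: c.label, inactive: c.inactive };
    });
  }
  return choices[table];
}

// Deactivating a table-specific copy leaves the shared task options untouched
localizeChoices("incident")[1].inactive = true;
console.log(choicesFor("incident")[1].inactive); // true
console.log(choicesFor("problem")[1].inactive);  // false
```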

Client Script Messages in ServiceNow

October 23, 2012, 1:44 pm - James Farrer

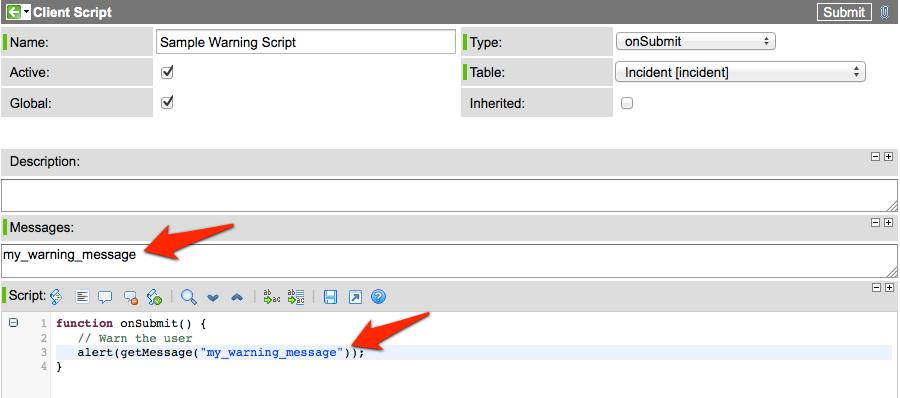

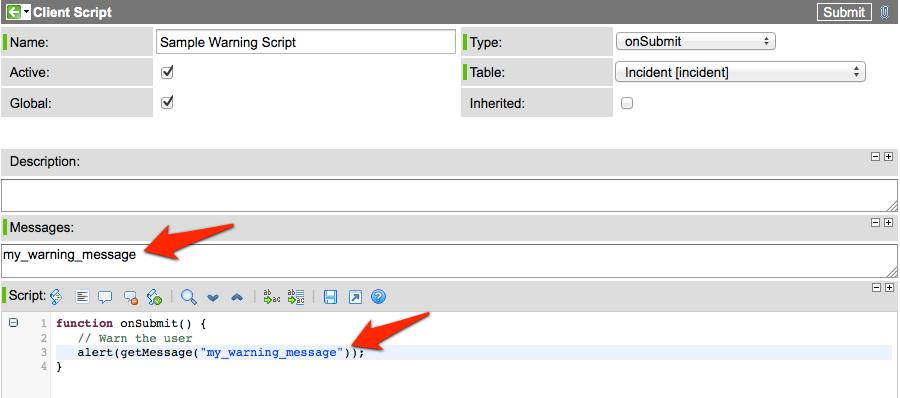

Right now I'm working with a client that has to support two different languages in their ServiceNow instance. Because of this I have been thinking more about using Messages. One area in particular that is relatively new and not often understood is Client Script Messages.

Until late last year, JavaScript alerts and other info or error messages used in client scripts were difficult to translate. Now there is a new field on the Client Script form called Messages. When I first saw this it didn't make a lot of sense to me, but after having used it a little I'm getting to be a big fan. Any time content can be separated from function it typically means less maintenance for the code.

The Messages field provides an easy way to access the System UI -> Messages table from a Client Script. On the server side we've been able to do this by calling gs.getMessage("sample message"). Now, in a few easy steps, you can do the same in a client script.

To set it up, put the key for each message the script needs to display into the Messages field (one per line). When the form loads, those messages are looked up in the Messages table in the proper language and sent to the client along with the script, so they are available. Then in your client script you can just call getMessage('message_key') to use the message.

If you don't want to mess with the Messages table while you're scripting, you can just put your message in as the parameter to getMessage and it will return the text as entered if it doesn't find anything in the Messages table that matches. I prefer using a more descriptive key instead of the actual text, but either way works.
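The lookup-with-fallback behavior described above can be modeled in a few lines. This is a standalone stand-in, not the ServiceNow client API: `preloadedMessages` plays the role of the messages the platform sends down for the keys listed in the Messages field, and the key `date_in_past_error` is hypothetical.

```javascript
// Hypothetical stand-in for the messages preloaded for a client script,
// already resolved to the user's language by the platform.
var preloadedMessages = {
  date_in_past_error: "The date must be in the future."
};

// Simulates the fallback: return the looked-up text if the key was found,
// otherwise return whatever was passed in, unchanged.
function getMessage(key) {
  return Object.prototype.hasOwnProperty.call(preloadedMessages, key)
    ? preloadedMessages[key]
    : key;
}

console.log(getMessage("date_in_past_error"));  // "The date must be in the future."
console.log(getMessage("Please enter a date")); // "Please enter a date"
```

In an actual client script you would skip the stand-in and call the platform's getMessage('date_in_past_error') directly, for example passing the result to g_form.addErrorMessage(), after listing date_in_past_error in the Messages field.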

Controlling Reference Field drop-down order in ServiceNow

October 19, 2012, 2:02 pm - James Farrer

I just spent a bit of time trying to change the order for the options in a reference field that is displayed as a drop-down. I was just about to give up when I ran into an attribute that appears to be just for this scenario.

The options are sorted by the display value by default. In this case I needed to order by another field. It turns out the secret is to add the "ref_sequence" attribute.

So if you wanted to order by an "order" field, you would add "ref_sequence=order". Simple but obscure.
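As a toy illustration in plain JavaScript (not ServiceNow code), the difference between the default ordering and `ref_sequence=order` comes down to which field the comparison uses. The records and comparator names are hypothetical.

```javascript
// Hypothetical reference records: a display value plus an "order" field
var options = [
  { name: "Software", order: 100 },
  { name: "Network", order: 200 },
  { name: "Hardware", order: 300 }
];

// Default behavior described above: sort by display value
function byDisplayValue(a, b) {
  return a.name.localeCompare(b.name);
}

// What ref_sequence=order asks for: sort by the named field instead
function byField(field) {
  return function (a, b) { return a[field] - b[field]; };
}

function names(list) {
  return list.map(function (o) { return o.name; });
}

console.log(names(options.slice().sort(byDisplayValue)));  // Hardware, Network, Software
console.log(names(options.slice().sort(byField("order")))); // Software, Network, Hardware
```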

The Importance of Improving Site Flow

September 26, 2012, 11:02 pm - James Farrer

Over the last week I've been making some minor adjustments to my site here, most of which you'd never notice because they are on the administrative side of things. After having thought numerous times, "I should make a link from here to there," I decided to make the changes. In a few places around the site I have added links and rearranged things to save a few clicks.

While this is a fairly minor thing from a code perspective, it makes a significant improvement in the flow of things while using the site. It has been a stark reminder of the importance of the little things in website development. That's not to say that all little things are important, but that some little things are far more important than many of the "really cool" complicated things in the background.

Proper placement after some good testing (preferably by real users in circumstances as close to real as possible) can go a long way in improving the flow through the site. And from my own experience I am reminded of how important that flow is in creating a positive experience on the site. Streamlining common tasks to make them easier (fewer clicks) and more intuitive (less searching for the link/button/page/etc.) will make other people's lives a bit easier.

On this little site that doesn't mean much, but in my work, over time that can add up for hundreds, if not thousands, of users. And hey, taking away a little bit of stress and giving back a little bit of time can be a great gift. I know I appreciate it.

Building a Portal to IT in Colorado

May 4, 2012, 1:09 pm - James Farrer

Yesterday, at the Colorado Local User Group, I presented how we've built a Portal to IT at BYU using ServiceNow. It was similar to what I presented last year at the ServiceNow user conference, Knowledge11, in San Diego. Since that first presentation we have done a major overhaul of our public-facing site, including a new menu system and many updates that address user feedback we've received. From what I can tell, both the website and the presentation have been fairly well received.

If you're interested in learning more about our CMS site and Service Catalog, or if you missed the presentation, you can find it here:

Building a Portal to IT (~6 MB PDF)

If you've got feedback, questions, or especially if you're willing to share some ideas about what has or has not worked for you, let me know. I'm also hoping to meet up with folks at the upcoming Knowledge12 conference to share ideas. Let me know if you're interested.

Dropbox and easy photo uploads to my website

April 13, 2012, 11:51 pm - James Farrer

So today I discovered that Dropbox has a Linux client. And that Linux client can be run from the command line. This is important since my servers don't have GUIs installed. This is very important since I've been struggling with easy file uploads to my website for quite some time. This is important since I have well over 10,000 photos on my site and the number is steadily growing.

For the most part I've been the primary contributor. And for all practical purposes, the only contributor. I have a custom built site that I use for a number of things, one of which is to learn how to do stuff. As part of this I have built in the ability to do bulk photo uploads via a mapped network drive. This is great if you're on my home network where you can map the drive. If not, well, it starts to get messy real quick. You can upload photos one at a time, but that's just painful unless you only have one. And this is rarely the case for me or other family members that want to contribute.

So here's the nitty gritty of the idea I had. I can set up a shared folder in Dropbox with the people who want to do bulk photo uploads to my site. I put the Dropbox client on the server, which, other than having to find a custom init script to keep the daemon running, was pretty simple. Voilà! Now my Dropbox files are on the server, including this shared folder for uploads.

The next step was to update the bulk photo import functionality in my website to look at the Dropbox folder. I went the extra step of making it look at both the old folder and the new one to keep the existing functionality intact, and then I had it. I was able to use pretty much the same code I already had, and it's working pretty slick. The biggest snag was that I had to set a few folder permissions to make sure the server could get to what I wanted. Otherwise, it was pretty simple.
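The post doesn't show the site's code or say what language it's written in, so here is a hypothetical Node.js sketch of the "look at both folders" idea. The folder paths, the extension list, and `findPendingPhotos` are all made up for illustration; a folder that is missing or unreadable is skipped with a warning rather than stopping the whole import.

```javascript
const fs = require("fs");
const path = require("path");

// Hypothetical folders; the real paths are not given in the post
const IMPORT_FOLDERS = [
  "/srv/photos/import",              // existing mapped-drive folder
  "/home/www/Dropbox/Photo Uploads"  // shared Dropbox folder
];

const IMAGE_EXTENSIONS = new Set([".jpg", ".jpeg", ".png", ".gif"]);

// Collect image files from every import folder, skipping folders that are
// missing or unreadable (for example, because of folder permissions).
function findPendingPhotos(folders) {
  const found = [];
  for (const folder of folders) {
    let entries;
    try {
      entries = fs.readdirSync(folder, { withFileTypes: true });
    } catch (err) {
      console.warn(`Skipping ${folder}: ${err.code}`);
      continue;
    }
    for (const entry of entries) {
      const ext = path.extname(entry.name).toLowerCase();
      if (entry.isFile() && IMAGE_EXTENSIONS.has(ext)) {
        found.push(path.join(folder, entry.name));
      }
    }
  }
  return found;
}

console.log(findPendingPhotos(IMPORT_FOLDERS));
```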

One of the next steps, now that Dropbox also has automatic photo upload from my phone, will be to set up a scheduled job that will monitor the Camera Uploads folder and automatically import them. Then I'll have roughly my own version of what Google+ does, which is super convenient. That will be a future step since I currently don't have the option for having private photos on my site and I don't want the pictures to be automatically public. Now that I have the hard part solved with Dropbox, that's just a matter of time before I can do all sorts of things like that.

If you haven't signed up for Dropbox yet and want to, you can by clicking on this link: http://db.tt/681mdFko. By doing so you'll give me some extra space for free and we both win.

RESTful JSON Web Services in ServiceNow - Part 2, How To

February 25, 2012, 10:57 am - James Farrer

This should explain the basic pieces needed to write a JSON web service in ServiceNow. The background leading up to this is contained in Part 1.

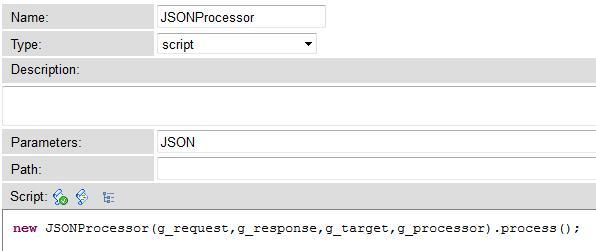

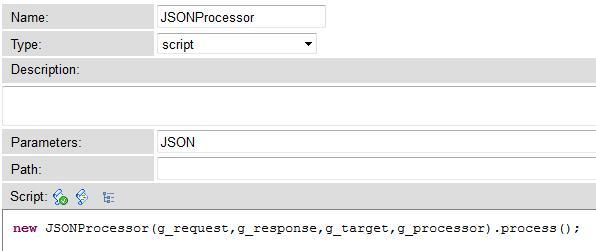

Processors

Processors in ServiceNow are a relatively undocumented feature that the system uses to provide the data in tables in different formats. For example, to get a JSON version of the data in a table, you can tack JSON on as a parameter in the URL and it will use this Processor:

It takes the parameter as indicated in the Parameters field and passes it into a Script Include to do the heavy lifting.

The g_request, g_response, g_target, and g_processor variables that are passed in allow for obtaining input parameters to the Processor and assembling and outputting the response. A few of the methods I used in my JSON web service are g_request.getParameter(), g_response.setContentType(), and g_processor.writeOutput().

To set up your own Processor, set the Type to "script" and, instead of using the Parameters field, fill in the Path. This will be the name in the URL, so if your Path is "super_cool_service" then you will access the Processor by going to "https://yourinstance.service-now.com/super_cool_service.do".

The rest of the magic happens in the script. You are most likely going to want parameters passed in. In my case these were passed in the URL as standard GET parameters, and I retrieved them with:

g_request.getParameter("parameter_name")

In my case I needed to do an aggregate query on Incident data and return the summarized results. So I took the parameters, sanitized them, and used them to build the query.

Then the script looped through the results and built a JavaScript object with the data we wanted to send back.
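The post doesn't show the sanitizing or the loop, so here is a hedged JavaScript sketch of both steps. The whitelist, the parameter names `group_by` and `start_date`, and the row shape are assumptions; in a real Processor the rows would come from the aggregate query rather than a plain array.

```javascript
// Hypothetical whitelist of fields the report may be grouped by
var ALLOWED_GROUP_BY = ["category", "priority", "assignment_group"];
var DATE_PATTERN = /^\d{4}-\d{2}-\d{2}$/;

// Keep only parameter values that match what the query expects;
// anything else becomes null so it never reaches the query.
function sanitizeParams(raw) {
  return {
    group_by: ALLOWED_GROUP_BY.indexOf(raw.group_by) !== -1 ? raw.group_by : null,
    start_date: DATE_PATTERN.test(raw.start_date || "") ? raw.start_date : null
  };
}

// Loop over aggregate rows (plain objects here) and build the JavaScript
// object that will later be encoded as the JSON response.
function buildResponseObject(params, rows) {
  var response_object = { group_by: params.group_by, start_date: params.start_date, results: [] };
  for (var i = 0; i < rows.length; i++) {
    // Convert counts to numbers in case they arrive as strings
    response_object.results.push({ value: rows[i].value, count: Number(rows[i].count) });
  }
  return response_object;
}

// The injected start_date fails the pattern and comes back as null
var params = sanitizeParams({ group_by: "category", start_date: "2012-02-01' OR 1=1" });
var rows = [{ value: "network", count: "12" }, { value: "software", count: "5" }];
console.log(JSON.stringify(buildResponseObject(params, rows)));
```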

There are a couple of pieces of code that make it possible to return the results as a JSON web service. ServiceNow has a JSON class that translates between a JSON-formatted string and a JavaScript object. I have used it elsewhere to parse JSON data from web services; here I am doing the reverse, generating the JSON string from the object that was built.

g_response.setContentType("application/json");
var response = new JSON();
g_processor.writeOutput(response.encode(response_object));

The first line sets the HTTP content type so clients know what format the data is coming back in. When I added this, my JSON formatter browser plugin started recognizing the content as JSON and formatting it in a more readable way. The next line instantiates the JSON interpreter that the last line uses to encode the assembled object. The last line also sends the resulting string back to the browser.

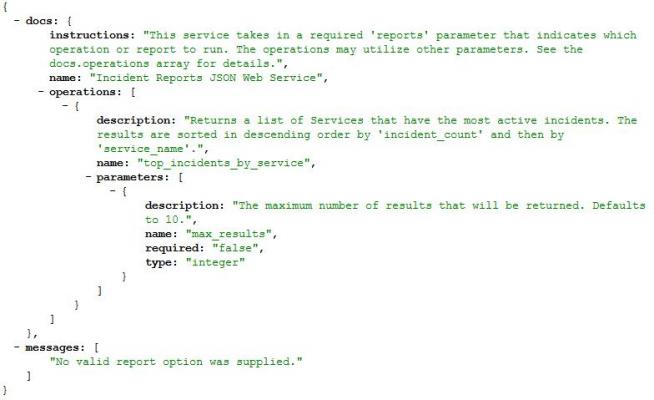

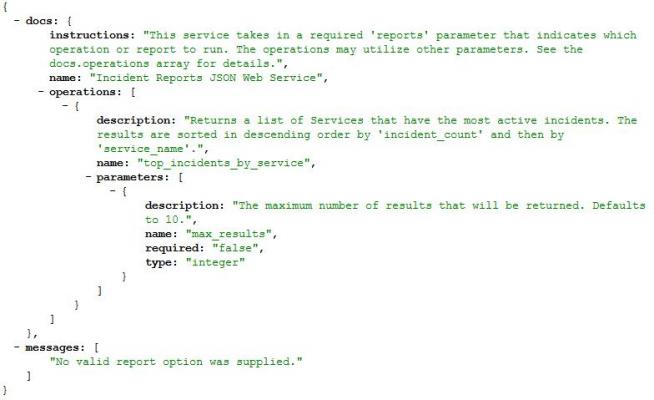

That's the meat of it. One of the things I did to make the service a little easier to consume was to make it self-documenting. That means that if you don't pass in the required parameters, it returns an error message along with instructions for what inputs it expects. I've seen this in other services and built on the idea. Here's the result:

In my case I expect additional functionality to be added to this service so I set up the Operations array in the response to allow for more report options. This is the response if the service is called directly with no parameters.
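Here is a hedged, standalone sketch of a self-documenting Processor script. The g_request.getParameter(), g_response.setContentType(), and g_processor.writeOutput() calls are the ones named above, but the objects are mocked so the sketch runs outside ServiceNow. The `operation` parameter, the contents of `OPERATIONS`, and the `runReport` callback are hypothetical, and JSON.stringify stands in for the ServiceNow JSON class.

```javascript
// Hypothetical list of supported operations, used both for routing and
// for the help returned when the request is incomplete.
var OPERATIONS = [
  { name: "incident_summary", description: "Counts incidents grouped by a field",
    parameters: ["group_by", "start_date"] }
];

function handleRequest(g_request, g_response, g_processor, runReport) {
  g_response.setContentType("application/json");
  var operation = g_request.getParameter("operation");
  var known = OPERATIONS.some(function (op) { return op.name === operation; });
  var response_object;
  if (!known) {
    // Missing or unknown operation: return an error plus usage instructions
    response_object = {
      error: operation ? "Unknown operation: " + operation : "Missing required parameter: operation",
      Operations: OPERATIONS
    };
  } else {
    response_object = { operation: operation, results: runReport(operation, g_request) };
  }
  // JSON.stringify stands in for encode() on ServiceNow's JSON class
  g_processor.writeOutput(JSON.stringify(response_object));
}

// Minimal mocks of the objects ServiceNow passes to a Processor script
function mockRequest(params) {
  return { getParameter: function (name) { return params.hasOwnProperty(name) ? params[name] : null; } };
}
var written = [];
var mockResponse = { setContentType: function (type) { written.push(type); } };
var mockProcessor = { writeOutput: function (text) { written.push(text); } };

handleRequest(mockRequest({}), mockResponse, mockProcessor, function () { return []; });
console.log(written[1]); // error message plus the Operations array
```

Keeping the Operations list in one place means the help text and the routing can't drift apart as more report options are added.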

Another point of note: we put the actual querying into a Script Include to allow for reuse. For example, if we later need to make an HTML version of the report, we can simply create a UI Page that calls the Script Include and formats the returned object.

I'd be interested to hear any feedback on improvements or other options. This is advanced ground with little documentation but it promises to be incredibly flexible and useful.

RESTful JSON Web Services in ServiceNow - Part 1, The Background

February 25, 2012, 10:14 am - James Farrer

At BYU I work on the Office of IT's website and internal tools that are powered by ServiceNow. It has been a good solution for us, giving us more flexibility than I have ever seen from a purchased product. At BYU we are trying to build out a Service Oriented Architecture (SOA) and have been creating many web services to enable systems to talk to each other.

ServiceNow has been very powerful in enabling us to fully participate in SOA. We are one of the largest consumers of web services on campus, and ServiceNow's native web services have made it possible for others to consume information from us as well. This is a win-win situation.

In the Office of IT we started with a preference for SOAP web services and have since migrated to RESTful JSON web services, since they have generally turned out to be simpler to set up, understand, and consume. They are also significantly less verbose (if you've made it this far into this article, I'm guessing this isn't news to you).

Generally, any set of data or table in ServiceNow is automatically accessible via several forms of web services. This has been great, but there are some cases where we need to do more heavy lifting than basic querying, inserting, and updating. This is where Scripted Web Services come in. We've made a couple of these, the most notable of which lets others tie into an order process in the Service Catalog.

Scripted Web Services have been a good tool. They let you easily take inputs, script the heavy lifting, and return the outputs, but they are for SOAP web services, were not designed to return multiple records, and aren't our preferred way of building web services.

Then a few days ago I ran into a post by John Anderson, a ServiceNow integration specialist, on his blog about how to launch a scheduled job from a URL. That sounded an awful lot like a REST web service to me.

He used a Processor to do the job. Processors are essentially undocumented and advanced parts of ServiceNow. I had run into them a little at a ServiceNow conference but hadn't explored them much. When I saw John's post, the lights started going on.

It was convenient that yesterday we also had a request for a web service to return some data that can't be easily obtained with the default web services.

One of my student employees and I took the head start John gave us and spent a combined 12 hours yesterday to learn about, build, document, and turn over to the users for validation a RESTful JSON web service. I'd say that was a successful day.

Now that I've written a whole bunch of history I think I'll split the how to into a separate post, Part 2.

SOPA and PIPA? What are they thinking!?!?!

January 18, 2012, 7:59 pm - James Farrer

If you have paid attention to the news over the last few days or if you have visited any one of the thousands of popular websites today including Google, Wikipedia, and many others, then you are probably at least vaguely familiar with the Stop Online Piracy Act (SOPA) and the Protect Intellectual Property Act (PIPA). These are similar bills that are being discussed in the House and Senate.

When I first heard about it I didn't think too much of it, but as I have learned more details I have been very surprised that something with so much potential to limit free speech, innovation, and progress in our country could make it into the legislative process at all, let alone be up for a vote in the next few days. I've read quite a bit on it and am not surprised at all to see so many sites and people protesting this legislation. While I am not opposed to protecting copyrighted works, this goes too far.

Here is probably the best analysis I've seen today of what the bills propose and what some of the ramifications for the world and the Internet are. Please read:

http://mashable.com/2012/01/17/sopa-dangerous-opinion/

Just the fact that I'm posting this on a website that I own and allowing comments on this post would put me at risk of losing my site and a whole lot more (especially since I could be held liable for attorneys' and other fees).

As near as I can tell, the technology industry is one of the fastest-growing industries right now, and I know of a large number of job openings in it. These bills, if passed, have significant potential to bypass due process of law and stifle growth, innovation, and progress, essentially reversing the growth trend if not completely destroying a significant part of the technology industry. If you think about it, most companies these days need a website just to survive, which makes the effects reach far beyond the technology industry.

For a government that seems pretty focused on creating jobs in a slow economy this is definitely going the wrong direction.

Just the financial implications of having to censor content on websites the way these bills indicate would put most small technology startups out of business before they even got started.

The impact alone on technology businesses would likely negate any possible benefit that the music and film industry could hope to gain from something like this.

To sum things up: SOPA/PIPA = Bad Idea! Anyone that supports this has definitely lost my vote because they are clearly indicating that they are out of touch with the world and are not willing to think through the very things they are signing their name to.